Your Bias Is Served: The Illusion of Detecting LLMs

The Allure of the Red Gauge

AI detectors are selling you an illusion. Behind the authoritative percentages, they are only detecting patterns.

From the claim of up to "99.99% accuracy" to an oddly satisfying speedometer-style red gauge flagging "100% confidence", the appeal is real. It brings the satisfaction of displaying a score to a population raised on grades, and ultimately fulfills the desire for uniqueness most people crave; tales of old that do not want to let go, yet they have to.

I have been working for the last decade on this meaningless-and-not-defined-mess-of-words that society calls artificial intelligence, trying to develop traceability in computer-assisted debate before it was even the cool kid in the room, and have been wearing the privileged title of "Senior Expert on AI" within the NATO Strategic Communications Centre of Excellence. Now that the ethos is set, I know what these detectors really are, but I'm a curious man and jumped in for more.

Putting "99% Accuracy" to the Test

So, I copied and pasted the original second paragraph of this paper¹ into one of these AI detection tools that boasts 99% accuracy and 17 million users. According to its front page, it is supported by the BBC, The New York Times, and other heavyweights.

Besides the fact that we apparently share a passion for Aristotle, given that their front page is a textbook execution of the rhetorical triangle to sell you an illusion, the verdict came back without appeal:

"Chance this entire text is..." reveals AI (29%), Human (71%), and Mixed (0%).

Too bad, because I asked my self-hosted mistral:7b LLM (a ChatGPT alternative installed on my computer) whether I had made mistakes in it, and the only suggestion I took from it was to add a semicolon.

I'm a man of science, and with my very useful PhD in mechanical engineering, I did the math: there are 305 characters in that second paragraph. Which means the generated content was 1 character out of 305. That is an astounding ~0.3% generated content. Of course, what that percentage really means is how closely this text matches the patterns of mainstream LLMs, but those LLMs are trained on human production, including some of our best.

In mathematics, we have this concept called a "counterexample". I'll spare you the formal logic expression, but if the claim were 100%, I could just say that my example is a valid refutation and call it a day. For those who see where this is going, that should be enough, and this perspective post should end with a "FIN". But the brain has its way of avoiding logic; it does so because survival required us to think fast, and we now carry the shortcuts we call biases.

The Illusion of Certainty

As a data scientist, I know 99% accuracy is often a 1% failure rate in disguise.

Let's play devil's advocate and pretend this 99% was achieved on a totally random and foolproof dataset. A 1% error rate on 50,000 students who wrote their own work is still around 500 innocent people falsely flagged. That is 500 reputations jeopardized by a "statistically acceptable" margin of error, 500 walks of shame. Sadly, there is no authority yet to say when these claims cross the line into being misleading.
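The arithmetic behind that figure can be sketched in a few lines. This is only an illustration, assuming the advertised 1% error lands entirely as false positives on students who all wrote their own text; the function name `expected_false_flags` is hypothetical.

```python
def expected_false_flags(honest_writers: int, false_positive_rate: float) -> float:
    """Expected number of human-written texts wrongly flagged as AI."""
    return honest_writers * false_positive_rate


# Assumption: all 50,000 students wrote their own work, and the 1% error
# rate applies to them as a false-positive rate.
flagged = expected_false_flags(50_000, 0.01)
print(f"Expected innocent students flagged: {flagged:.0f}")
```

The count scales linearly with the population: the larger the school, district, or platform, the more people end up on the wrong side of the same "acceptable" margin.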

But that is just the hypothetical. In reality, this 99% is most probably not invented, but what they don't tell you is that they select the data they run the test on, which renders the confidence level biased and meaningless. And when their tool inevitably flags your perfectly human-written text? They can simply hide behind their own statistics. While repeatedly testing your own writing should theoretically lower the odds of a false positive, probability is heartless. The company can simply say you were unlucky and hit that 1% error every single time. If you enjoy an illusionist's show, you know the magician controls the context that puts you in the spectator's seat, and you accept that it is not real.
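To put a number on "unlucky every single time", here is a rough sketch. It assumes each run is an independent trial with a 1% false-positive rate, which is an assumption made purely for illustration; `prob_all_false_positives` is a hypothetical helper.

```python
def prob_all_false_positives(false_positive_rate: float, runs: int) -> float:
    """Chance that every one of `runs` independent tests wrongly flags a human text."""
    return false_positive_rate ** runs


# Assumption: runs are independent and share the same 1% false-positive rate.
for runs in (1, 2, 3):
    p = prob_all_false_positives(0.01, runs)
    print(f"{runs} run(s): {p:.6%}")
```

Under that assumption the odds collapse quickly, yet they never reach zero, so "you were unlucky" can never be formally ruled out. And if the detector is deterministic, resubmitting the same text returns the same verdict, so the odds do not shrink at all.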

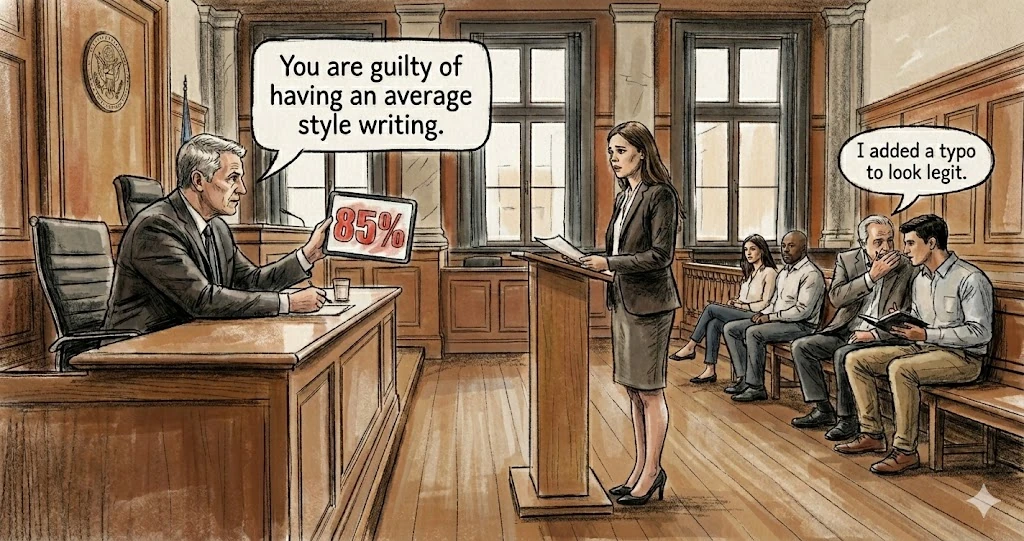

Victim or illusionist, the reason these tools thrive is confirmation bias. We need them to work; otherwise, how can we establish authorship, a concept we have carried for centuries? Because we need it, we take a number on a screen (75%, 85%, 90%) and let it validate our instinct. An instinct that was never based on formal logic anyway, but on jumping to conclusions, a trait we evolved to survive.

But wait, all these posts on LinkedIn, they are so similar to the output of ChatGPT, they must have used it!

To that, you are probably right. But all it means is that you are detecting the default output of a set of publicly known AI tools (LLMs): you detect a pattern, not AI. Even then, how could you train a detection model on a private model, a secret state model, or simply a model trained by an expert on their own computer? You can't.

Even in the highly improbable case where their detection models made sense, how could you definitively prove a human didn't write a generic-sounding text unless you were sitting right next to them? Because communicating in patterns is also a Homo sapiens trait, it cannot serve as proof of exclusivity. At most it is a maybe, and you don't judge on a maybe, at least not in the EU we live in.

Why the Illusion Matters

Why does this illusion matter? Because we cannot afford distractions.

Some of us are currently obsessed with "catching" ChatGPT, but that is a sideshow. All of that would be fine with me if we were not living in a time when malign foreign entities are trying to dismantle what has been built: a unified Europe after centuries of wars. Using AI well requires a high level of skill: maths, IT, and deep domain knowledge. If you don't have these skills in your team, you are most probably building an illusion of achievement until processes and governance are properly established, or until your luck runs out. But I'm not here to judge; I'm here to contribute, like many others, to the EU's future.

LLMs are here to stay, and trying to detect their use is futile. Because they are trained on our own production, they will be strikingly similar to the way we write and talk. And because the human brain is plastic and no one can stop time, what you flag as AI may have been real, or could be real in the future.

Forget about the detectors. What matters is not what is said, but what it tries to do to its audience. That influence factor, however, can be approached. We will save that conversation for later and try to build it at Exalea instead.

FIN

Author's Note:

As this is the first publication from our pro-EU company Exalea Sp. z o.o., I want to be clear: our goal is not to tear down specific businesses, but to dismantle the illusions holding our industry back. As Shakespeare said: "There is nothing either good or bad, but thinking makes it so." If you are wondering where Exalea stands on the reality of "AI" and the future of our digital landscape, I hope this perspective provides the answer.

Sincerely,

Benjamin Delhomme

Founder of Exalea Sp. z o.o.

Footnotes

1. This paragraph was later edited for readability, but the exact draft used for the test was:

   From the claim of "99% accuracy" to an oddly satisfying speedometer-style red gauge flagging "100% confidence" that a text contains AI-generated content, and above the satisfaction of displaying a score to a population raised on grades, the appeal is real and fulfills the desire for uniqueness we all crave; tales of old that do not want to let go, yet they have to.